If you’ve ever tried to understand how machine learning models actually learn, you’ve probably come across the term gradient descent. At first, it may sound technical or complicated, but the idea behind it is surprisingly simple and intuitive.

In machine learning, models don’t automatically know the right answers. They learn by making mistakes and improving over time. Gradient descent is the method that helps models adjust themselves step by step so they can reduce errors and make better predictions.

Understanding this concept is important because it forms the foundation of how most machine learning and deep learning models are trained.

Understanding the Core Idea in Simple Terms

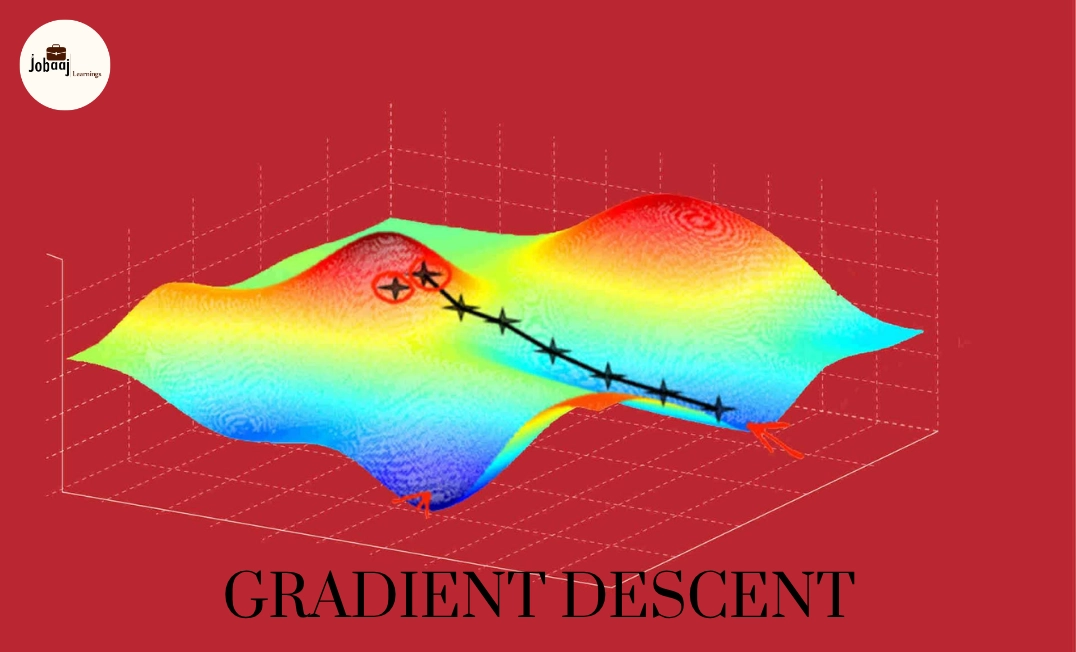

Imagine you are standing on top of a hill in complete fog, and your goal is to reach the lowest point in the valley. You cannot see the entire path, but you can feel the slope beneath your feet.

So what do you do? You take small steps in the direction that goes downward.

This is exactly how gradient descent works.

It helps a machine learning model move step by step toward the lowest error by adjusting its parameters in the right direction.

What is Gradient Descent?

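$$\theta := \theta - \alpha \nabla J(\theta)$$

Here θ stands for the model's parameters, α is the learning rate (the step size), and ∇J(θ) is the gradient of the loss function J with respect to those parameters.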

Gradient descent is an optimization algorithm used to minimize a model’s error, which is measured by a loss function. It works by repeatedly updating the model’s parameters in the direction opposite to the gradient, which is the direction in which the loss decreases most steeply.

In simpler words, it helps the model learn by continuously improving itself and reducing mistakes over time.
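To make the update rule concrete, here is a minimal Python sketch that applies it to a made-up one-parameter loss, J(θ) = (θ − 3)², whose gradient is 2(θ − 3) and whose minimum is at θ = 3. The loss, the function names, and the values of α and the starting point are illustrative assumptions, not part of any library.

```python
def loss(theta: float) -> float:
    """Illustrative loss J(theta) = (theta - 3)^2, minimized at theta = 3."""
    return (theta - 3.0) ** 2


def grad(theta: float) -> float:
    """Derivative of the illustrative loss: dJ/dtheta = 2 * (theta - 3)."""
    return 2.0 * (theta - 3.0)


def update(theta: float, alpha: float) -> float:
    """One gradient descent step: theta := theta - alpha * grad J(theta)."""
    return theta - alpha * grad(theta)


theta = 0.0   # starting guess
alpha = 0.1   # learning rate
for step in range(5):
    theta = update(theta, alpha)
    print(f"step {step + 1}: theta = {theta:.4f}, loss = {loss(theta):.4f}")
```

With α = 0.1, each step shrinks the distance to 3 by a factor of 1 − 2α = 0.8, so θ moves 0 → 0.6 → 1.08 → 1.464 and so on, reaching about 2.017 after five steps.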

Why Gradient Descent Is Important

Without gradient descent or a similar optimization method, many machine learning models would have no systematic way to improve.

It plays a key role in:

- Training models efficiently

- Reducing prediction errors

- Finding parameter values that minimize the loss

Many machine learning algorithms, and most deep learning models in particular, rely on this process (or a variant of it) to learn patterns from data.

How Gradient Descent Works (Step-by-Step)

Instead of jumping directly to the best solution, gradient descent follows a gradual and structured approach.

First, the model starts with random values for its parameters. At this stage, predictions are usually inaccurate.

Then, the model measures how far its predictions are from the actual values. This measure of error is called the loss.

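Next, it computes the gradient of the loss with respect to each parameter. The gradient points in the direction in which the error increases fastest, so its opposite (the negative gradient) is the direction in which the error decreases fastest.

Finally, the model updates its parameters by a small step in that downhill direction and repeats the process many times.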

Over time, these small adjustments lead the model closer to the best possible solution.
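The four steps above can be sketched in plain Python for a simple linear model, prediction = w·x + b, trained with mean squared error. The tiny dataset (generated exactly from y = 2x + 1), the learning rate, and the number of iterations are made-up assumptions chosen only for illustration.

```python
import random

# Made-up training data that follows y = 2x + 1 exactly.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]

# Step 1: start with random parameter values.
random.seed(0)
w = random.uniform(-1.0, 1.0)
b = random.uniform(-1.0, 1.0)

learning_rate = 0.05
n = len(xs)
losses = []

for iteration in range(500):
    # Step 2: measure how far predictions are from the targets (mean squared error).
    preds = [w * x + b for x in xs]
    errors = [p - y for p, y in zip(preds, ys)]
    loss = sum(e * e for e in errors) / n
    losses.append(loss)

    # Step 3: compute the gradient of the loss with respect to w and b.
    grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
    grad_b = 2.0 / n * sum(errors)

    # Step 4: move the parameters a small step against the gradient, then repeat.
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

print(f"w = {w:.3f}, b = {b:.3f}, final loss = {losses[-1]:.6f}")
```

Because the data was generated from y = 2x + 1, w and b should end up very close to 2 and 1, and the recorded losses shrink from one iteration to the next.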

Understanding Learning Rate

One of the most important parts of gradient descent is the learning rate.

The learning rate decides how big each step should be.

If the steps are too large, the model may overshoot the minimum, bounce back and forth around it, or even diverge. If they are too small, learning becomes very slow.

So choosing the right learning rate is essential for efficient training.
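The effect of the step size is easy to see on the simplest possible loss, J(θ) = θ², whose gradient is 2θ and whose minimum is at θ = 0. In this minimal sketch (the specific learning rates are arbitrary illustrative choices), each step multiplies θ by (1 − 2α): a tiny α barely moves, a moderate α converges quickly, and any α above 1 makes |1 − 2α| greater than 1, so θ jumps across the minimum by a growing amount and diverges.

```python
def run(alpha: float, theta: float = 1.0, steps: int = 20) -> float:
    """Run gradient descent on J(theta) = theta**2 and return the final theta."""
    for _ in range(steps):
        theta -= alpha * 2.0 * theta  # gradient of theta**2 is 2 * theta
    return theta


for alpha in (0.01, 0.1, 0.45, 1.1):
    print(f"alpha = {alpha:<5} -> theta after 20 steps = {run(alpha):.6g}")
```

Starting from θ = 1, after 20 steps α = 0.01 is still about 0.67 away from the minimum, α = 0.1 is about 0.01 away, α = 0.45 is essentially at 0, and α = 1.1 has grown to roughly 38.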

Types of Gradient Descent

While the core idea remains the same, gradient descent can be applied in different ways depending on how data is processed.

1. Batch gradient descent uses the entire dataset to compute each update, which makes it stable but slow on large datasets.

2. Stochastic gradient descent (SGD) updates the parameters using one data point at a time. Each update is much cheaper, but the updates are noisy, so progress is less stable.

3. Mini-batch gradient descent is a balance between the two: it uses small groups of data points (mini-batches) for each update. This is the most commonly used approach in practice, especially in deep learning.
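The three variants differ only in how many examples feed each update, so one function can cover all of them. In this sketch, a hypothetical `train` function's `batch_size` argument selects the variant: the full dataset gives batch gradient descent, 1 gives stochastic gradient descent, and a value in between (16 here, an arbitrary choice) gives mini-batch. The noise-free data from y = 3x − 2, the learning rate, and the epoch count are illustrative assumptions.

```python
import random

# Made-up, noise-free data from y = 3x - 2, used only to compare update schemes.
random.seed(1)
xs = [random.uniform(-1.0, 1.0) for _ in range(100)]
data = [(x, 3.0 * x - 2.0) for x in xs]


def gradients(w, b, batch):
    """Mean-squared-error gradients for prediction = w * x + b over one batch."""
    n = len(batch)
    grad_w = sum(2.0 * (w * x + b - y) * x for x, y in batch) / n
    grad_b = sum(2.0 * (w * x + b - y) for x, y in batch) / n
    return grad_w, grad_b


def train(batch_size, epochs=50, lr=0.1, seed=0):
    """batch_size == len(data): batch GD; 1: stochastic GD; in between: mini-batch."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    samples = list(data)
    for _ in range(epochs):
        rng.shuffle(samples)  # visit examples in a new random order each epoch
        for start in range(0, len(samples), batch_size):
            batch = samples[start:start + batch_size]
            grad_w, grad_b = gradients(w, b, batch)
            w -= lr * grad_w
            b -= lr * grad_b
    return w, b


for name, size in [("batch", len(data)), ("stochastic", 1), ("mini-batch", 16)]:
    w, b = train(size)
    print(f"{name:>10}: w = {w:.3f}, b = {b:.3f}")
```

In this setup, 50 epochs give batch gradient descent only 50 updates (each using all 100 examples), versus 350 for mini-batch and 5,000 for stochastic, so batch ends up noticeably further from w = 3, b = −2. On real, noisy data, the stochastic updates would also keep jittering around the solution rather than settling exactly.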

Real-Life Example

Let’s say you are training a model to predict house prices.

At first, the predictions may be far from the actual prices. Gradient descent helps the model adjust its internal parameters step by step so that predictions become more accurate over time.

With each iteration, the error decreases, and the model improves its understanding of the data.

Common Challenges in Gradient Descent

Even though the concept is simple, there are some challenges.

Sometimes the model may get stuck in a local minimum, a point that is lower than its surroundings but not the lowest point overall (the global minimum). In other cases, a poorly chosen learning rate can slow down training or make it unstable.

There can also be situations where the model keeps oscillating instead of converging properly.

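These challenges are usually addressed by tuning the learning rate carefully (sometimes with a schedule that shrinks it over time), trying different starting points, and using refinements such as momentum or adaptive optimizers like Adam.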
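Here is a minimal sketch of the local-minimum problem, using a made-up one-parameter loss f(θ) = θ⁴ − 2θ² + 0.5θ that has two valleys of different depth. The same update rule, started from two different points, settles in different valleys. The function, the learning rate, and the starting points are illustrative assumptions.

```python
def f(theta: float) -> float:
    """Illustrative non-convex loss with two valleys of different depth."""
    return theta ** 4 - 2.0 * theta ** 2 + 0.5 * theta


def df(theta: float) -> float:
    """Derivative of f: 4*theta^3 - 4*theta + 0.5."""
    return 4.0 * theta ** 3 - 4.0 * theta + 0.5


def descend(theta: float, lr: float = 0.05, steps: int = 200) -> float:
    """Plain gradient descent on f from the given starting point."""
    for _ in range(steps):
        theta -= lr * df(theta)
    return theta


for start in (-2.0, 2.0):
    end = descend(start)
    print(f"start {start:+.1f} -> theta = {end:.3f}, loss = {f(end):.3f}")
```

Started at −2, the descent settles near θ ≈ −1.06, the deeper (global) minimum; started at +2, it settles near θ ≈ 0.93, a local minimum with a higher loss. Both points have a gradient of zero, so plain gradient descent has no reason to leave either one.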

Why You Should Understand Gradient Descent

If you are learning machine learning, gradient descent is not just another concept; it is a foundation.

It helps you understand:

- How models learn from data

- Why tuning hyperparameters such as the learning rate matters

- How optimization works in real scenarios

Once you understand this, many other machine learning concepts become easier to grasp.

Conclusion

Gradient descent is a simple yet powerful idea that drives the learning process in machine learning models. By taking small steps in the direction of lower error, it helps models improve their predictions over time.

While the math behind it can go deeper, the core idea remains intuitive: learning through gradual improvement. Mastering this concept gives you a strong base for understanding more advanced machine learning techniques.
