Data is the backbone of modern businesses, and ensuring that it is stored and organized properly is crucial. For businesses to make informed decisions, data must be consistent, accurate, and structured in a way that minimizes redundancy. This is where data normalization plays a key role. It is a technique used in database management to organize data efficiently and eliminate unnecessary duplication.

Data normalization is a fundamental concept in the design and management of relational databases. It involves the process of arranging data in such a way that redundancies are minimized, relationships are made clearer, and data consistency is maintained. In this blog, we will delve into the concept of data normalization, its significance, and how it contributes to better database management.

What is Data Normalization?

Data normalization is the process of structuring a relational database so that redundancy is reduced and data integrity is maintained. The objective is to store each fact once, in the table where it logically belongs.

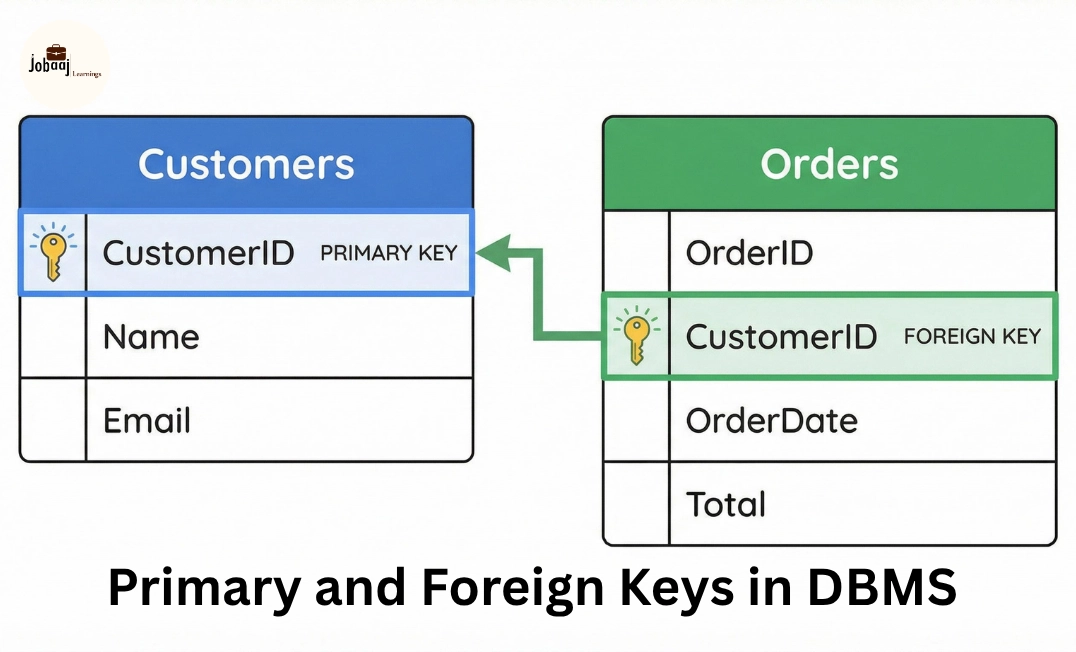

Normalization involves breaking down a database into smaller, manageable tables and ensuring that relationships between the tables are maintained through foreign keys. This reduces redundancy and ensures that data updates or deletions can be done without causing inconsistencies.

Why Normalize Data?

- Eliminate Data Redundancy: Duplicate data is minimized, which reduces storage needs and the risk of inconsistency.

- Maintain Data Integrity: By organizing data properly, the database ensures accuracy and consistency in data retrieval and modification.

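- Improve Performance: Smaller, well-structured tables make inserts, updates, and deletes cheaper because each change touches fewer rows. Reads can also benefit, although queries that combine many tables pay a cost for the joins.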

Types of Data Normalization (Normal Forms)

Data normalization is achieved in stages called normal forms. Each stage of normalization addresses a specific type of redundancy or anomaly in the data. There are several levels of normalization, but the most commonly used are:

1. First Normal Form (1NF)

- Ensure that all values are atomic (i.e., each column in a record holds a single, indivisible value).

- Rule: In 1NF, each column should contain only one value per record. There should be no repeating groups or arrays.

Example:

A table storing customer information might have columns for CustomerID, Name, and Phone Numbers. Instead of storing multiple phone numbers in a single cell (e.g., "123-456-7890, 987-654-3210"), they should be separated into distinct rows or tables.
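The phone number example can be sketched in a few lines of Python. This is a minimal illustration, not a production routine: the function name `to_first_normal_form`, the sample customers, and the comma-separated input format are all assumptions made for the example.

```python
def to_first_normal_form(rows):
    """Split a comma-separated phone cell into one (customer_id, phone) row per number."""
    phone_rows = []
    for customer_id, _name, phones in rows:
        for phone in phones.split(","):
            phone = phone.strip()
            if phone:  # skip empty pieces such as a trailing comma
                phone_rows.append((customer_id, phone))
    return phone_rows


# Hypothetical unnormalized data: several phone numbers share one cell.
unnormalized = [
    (1, "Asha", "123-456-7890, 987-654-3210"),
    (2, "Ravi", "555-000-1111"),
]

# After the split, every cell holds exactly one value.
customers = [(cid, name) for cid, name, _ in unnormalized]
customer_phones = to_first_normal_form(unnormalized)
```

Each customer now appears once in `customers`, and `customer_phones` holds one row per phone number, linked back by the customer ID.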

2. Second Normal Form (2NF)

- Eliminate partial dependencies, i.e., non-key attributes should depend on the entire primary key.

- Rule: 2NF requires that all non-key attributes depend entirely on the primary key. This applies to tables with a composite primary key (a key that consists of more than one column).

Example:

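In a table where OrderID and ProductID together form the primary key, an attribute like ProductName depends only on ProductID, not on the combination of OrderID and ProductID. That is a partial dependency, so ProductName should move to a separate Product table keyed by ProductID.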
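As a rough sketch of this split, the following uses Python's built-in `sqlite3` module with an in-memory database. The table names `Products` and `OrderItems`, the `Quantity` column, and the sample rows are assumptions chosen for illustration.

```python
import sqlite3


def build_2nf_schema():
    """Create a schema where ProductName lives with ProductID, not with each order line."""
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled
    conn.executescript("""
        CREATE TABLE Products (
            ProductID   INTEGER PRIMARY KEY,
            ProductName TEXT NOT NULL
        );
        CREATE TABLE OrderItems (
            OrderID   INTEGER NOT NULL,
            ProductID INTEGER NOT NULL REFERENCES Products(ProductID),
            Quantity  INTEGER NOT NULL,          -- depends on the whole composite key
            PRIMARY KEY (OrderID, ProductID)
        );
    """)
    return conn


conn = build_2nf_schema()
conn.execute("INSERT INTO Products VALUES (10, 'Keyboard')")
conn.executemany("INSERT INTO OrderItems VALUES (?, ?, ?)", [(1, 10, 2), (2, 10, 1)])

# The product name is stored once and recovered with a join when needed.
order_lines = conn.execute("""
    SELECT oi.OrderID, p.ProductName, oi.Quantity
    FROM OrderItems AS oi
    JOIN Products AS p ON p.ProductID = oi.ProductID
    ORDER BY oi.OrderID
""").fetchall()
```

Quantity stays in `OrderItems` because it depends on both the order and the product, while the product name is stored a single time no matter how many orders include it.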

3. Third Normal Form (3NF)

- Remove transitive dependencies, i.e., non-key attributes should not depend on other non-key attributes.

- Rule: 3NF requires that all non-key attributes depend directly on the primary key and not on other non-key attributes.

Example:

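In a Customer table that stores both CustomerCity and CustomerCountry, the country depends on the city rather than directly on the customer (assuming each city belongs to one country). Instead of repeating the country in every customer row, move that mapping into its own table and reference it from the Customer table via a foreign key.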
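One way to picture removing this transitive dependency is the plain-Python sketch below. It assumes, for illustration only, that a city name identifies exactly one country in the data; the function name `split_city_country` and the sample rows are hypothetical.

```python
def split_city_country(customers):
    """Move the city-to-country fact out of customer rows into a separate lookup."""
    city_country = {}
    slim_customers = []
    for customer_id, name, city, country in customers:
        # Assumption: each city maps to one country; conflicting data is rejected.
        if city_country.setdefault(city, country) != country:
            raise ValueError(f"{city!r} maps to more than one country")
        slim_customers.append((customer_id, name, city))
    return slim_customers, city_country


# Hypothetical rows: (CustomerID, Name, CustomerCity, CustomerCountry)
rows = [
    (1, "Asha", "Mumbai", "India"),
    (2, "Ravi", "Mumbai", "India"),
    (3, "Lena", "Berlin", "Germany"),
]

slim_customers, city_country = split_city_country(rows)
```

The country for Mumbai is now recorded once in `city_country` instead of once per customer, so correcting it later means changing a single entry.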

4. Boyce-Codd Normal Form (BCNF)

- Handle situations where 3NF is satisfied, but some attribute that is not a candidate key still determines other attributes.

- Rule: BCNF requires that every determinant (an attribute that determines the value of other attributes) should be a candidate key.

Example:

If a table contains CourseID, Instructor, and InstructorDepartment, where Instructor determines InstructorDepartment but Instructor is not a candidate key, the table violates BCNF. The solution is to split the data into two tables: one that maps each course to its instructor and one that maps each instructor to a department.
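The check behind this example can be sketched in Python. The helpers `determines` and `is_unique` and the sample rows are hypothetical, and testing sample data can only suggest a dependency; in practice, functional dependencies come from the business rules, not from whatever rows happen to be in the table.

```python
def determines(rows, lhs, rhs):
    """Return True if equal values in column `lhs` always come with equal values in `rhs`."""
    seen = {}
    for row in rows:
        if seen.setdefault(row[lhs], row[rhs]) != row[rhs]:
            return False
    return True


def is_unique(rows, column):
    """Return True if no value in `column` repeats, i.e. it could act as a key here."""
    values = [row[column] for row in rows]
    return len(values) == len(set(values))


# Hypothetical course assignments.
courses = [
    {"CourseID": "C1", "Instructor": "Rao", "InstructorDepartment": "Math"},
    {"CourseID": "C2", "Instructor": "Rao", "InstructorDepartment": "Math"},
    {"CourseID": "C3", "Instructor": "Kim", "InstructorDepartment": "Physics"},
]

# Instructor determines the department but repeats across rows, so it is not a key.
violates_bcnf = (
    determines(courses, "Instructor", "InstructorDepartment")
    and not is_unique(courses, "Instructor")
)

# Decomposition: one table per determinant.
course_instructors = [(r["CourseID"], r["Instructor"]) for r in courses]
instructor_departments = sorted(
    {(r["Instructor"], r["InstructorDepartment"]) for r in courses}
)
```

After the split, each instructor's department is stored once in `instructor_departments`, and joining the two tables on Instructor reproduces the original rows.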

Significance of Data Normalization

1. Reduces Data Redundancy

Normalization aims to store each piece of information in only one place, so the same data is not repeated across rows. This reduces the amount of storage required and prevents issues caused by inconsistent copies.

For instance, in a customer database, if the customer’s address was stored with every order they made, it would result in duplicate addresses across multiple rows. Normalization ensures that the address is stored only once and linked to the customer through a foreign key.

2. Enhances Data Integrity and Accuracy

By minimizing redundancy, normalization ensures that data modifications (inserts, updates, and deletes) only need to happen in one place. This ensures data integrity, as changes are made consistently across the database.

If an address needs to be updated, normalization means the change will only need to happen in one place rather than in multiple rows, avoiding the risk of inconsistent data across the database.
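The address scenario can be illustrated with a small `sqlite3` sketch. The `Customers` and `Orders` tables, their columns, and the sample values are assumptions made for the example.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.executescript("""
    CREATE TABLE Customers (
        CustomerID INTEGER PRIMARY KEY,
        Name       TEXT NOT NULL,
        Address    TEXT NOT NULL
    );
    CREATE TABLE Orders (
        OrderID    INTEGER PRIMARY KEY,
        CustomerID INTEGER NOT NULL REFERENCES Customers(CustomerID)
    );
    INSERT INTO Customers VALUES (1, 'Asha', '12 Old Street');
    INSERT INTO Orders VALUES (100, 1), (101, 1), (102, 1);
""")

# The address lives in one row, so one UPDATE is enough.
rows_changed = db.execute(
    "UPDATE Customers SET Address = ? WHERE CustomerID = ?",
    ("34 New Avenue", 1),
).rowcount

# Every order sees the new address through the join.
order_addresses = db.execute("""
    SELECT o.OrderID, c.Address
    FROM Orders AS o
    JOIN Customers AS c ON c.CustomerID = o.CustomerID
    ORDER BY o.OrderID
""").fetchall()
```

Had the address been copied onto each order row, the same change would have required updating three rows, and missing any one of them would leave the data inconsistent.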

3. Improves Query Performance

A normalized database is often smaller and contains fewer duplicates, which can improve query performance, particularly for writes and for queries that touch a single table. Smaller, more focused tables mean less data to scan and fewer rows to change per update.

Clear key relationships also make joins between tables more logical and easier to manage, although queries that join many tables can be slower than reads from a single wide table.

4. Facilitates Better Data Maintenance

With normalized tables, you can better maintain and scale your database over time. Since data is structured logically and redundancies are eliminated, it becomes easier to manage updates, deletions, and additions.

For example, when customer details change, you don’t have to worry about inconsistencies because the data is stored in a centralized location. Additionally, as your database grows, normalized data makes it easier to manage new records.

When to Avoid Normalization

While normalization is beneficial for reducing redundancy and improving data integrity, there are certain situations where normalization may not be the best choice:

- Performance Considerations: For certain use cases (e.g., read-heavy applications), normalization may introduce complex joins that could slow down query performance. In such cases, denormalization might be considered to improve read performance, at the cost of redundant data that must be kept consistent.

- Simpler Data Models: In some scenarios, especially for small-scale applications or non-relational databases, normalization might not be necessary, and a denormalized structure could suffice.
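The trade-off behind denormalization can be seen in a short `sqlite3` sketch. The `OrderReport` table, the helper names, and the sample data are hypothetical; the point is only that a denormalized copy reads without a join but goes stale until it is refreshed.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.executescript("""
    CREATE TABLE Customers (CustomerID INTEGER PRIMARY KEY, Name TEXT NOT NULL);
    CREATE TABLE Orders (
        OrderID    INTEGER PRIMARY KEY,
        CustomerID INTEGER NOT NULL REFERENCES Customers(CustomerID),
        Total      REAL NOT NULL
    );
    INSERT INTO Customers VALUES (1, 'Asha');
    INSERT INTO Orders VALUES (100, 1, 25.0), (101, 1, 40.0);

    -- Denormalized copy: the customer name is repeated on every order row.
    CREATE TABLE OrderReport AS
        SELECT o.OrderID, c.Name AS CustomerName, o.Total
        FROM Orders AS o
        JOIN Customers AS c ON c.CustomerID = o.CustomerID;
""")


def report_names(conn):
    """Read customer names straight from the denormalized table (no join needed)."""
    rows = conn.execute("SELECT DISTINCT CustomerName FROM OrderReport")
    return [row[0] for row in rows]


def refresh_report(conn):
    """Rebuild the denormalized copy from the normalized source tables."""
    conn.executescript("""
        DELETE FROM OrderReport;
        INSERT INTO OrderReport
            SELECT o.OrderID, c.Name, o.Total
            FROM Orders AS o
            JOIN Customers AS c ON c.CustomerID = o.CustomerID;
    """)


db.execute("UPDATE Customers SET Name = 'Asha K.' WHERE CustomerID = 1")
stale_names = report_names(db)   # the copy still holds the old name

refresh_report(db)
fresh_names = report_names(db)   # the copy matches the source again
```

Reads from `OrderReport` avoid the join, but every change to the source tables now needs a matching refresh, which is the consistency cost that denormalization accepts.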

Conclusion

Data normalization is a foundational practice in database design that helps to organize data efficiently, reduce redundancy, and maintain data integrity. By structuring data in a logical manner through the application of normal forms, organizations can ensure the consistency, accuracy, and scalability of their data.

While normalization may come with performance trade-offs, especially in complex applications, its ability to streamline data maintenance and reduce anomalies makes it an essential part of relational database management. Whether you are building a small database or working on a large-scale enterprise system, understanding the significance of data normalization is crucial for building efficient and reliable systems.
